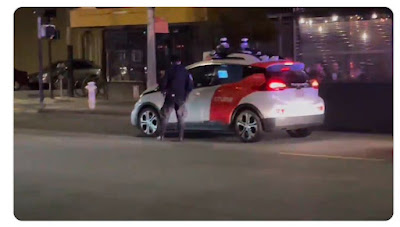

In April 2022, San Francisco police pulled over an uncrewed Cruise autonomous test vehicle for not having its headlights on. Much fun was had on social media about the perplexed officer having to chase the car a few meters after it repositioned during the traffic stop. Cruise said the repositioning was somehow intentional behavior. They also said their vehicle "did not have its headlights on because of a human error" (Source: https://www.theguardian.com/technology/2022/apr/11/self-driving-car-police-pull-over-san-francisco).

The behavior during the traffic stop indicates that Cruise needs to do a better job of making it easy for local police to conduct traffic stops -- but that's not the main event. The real issue here is the headlights being off.

Cruise said in a public statement: "we have fixed the issue that led to this." Forgive me if I'm not reassured. A purportedly completely autonomous car (the ones that are supposed to be nearly perfect compared to those oh-so-flawed human drivers) that always drives at night didn't know to turn its headlights on when driving in the dark. Seriously? Remember that headlights on at night is not just for the AV itself (which might not need them in good city lighting with a lidar etc.) but also to be visible to other road users. So headlights off at night is a major safety problem. (Headlights on in day is also a good idea -- at least white forward-facing running lights, which this vehicle also did not seem to have on.)

Cruise says these vehicles are autonomous -- no human driver required. And they only test at night, so needing headlights can hardly be a surprise. But they got it wrong. How can that be?

This can't just be hand-waved away. Something really fundamental seems broken, and it is just a question of what it is.

The entire AV industry, including Cruise, has a history of extreme secrecy and in particular lack of transparency with safety. So we can only speculate. Here are the possibilities I can think of. None of them inspire confidence in safety, and they tend to get worse as we go down the list.

- The autonomy software had a defect that kept it from turning the headlights on at night. Perhaps, but that seems unlikely, because such a defect would (one assumes) affect the entire fleet. There should also be a safety check built into the software to confirm that the headlights actually turn on. If this is what happened, the defect never should have slipped through quality control, let alone a rigorous safety critical software design process, and it raises significant concerns about overall software safety.

- The headlight switch is supposed to be on at all times. Many (most?) vehicles have "smart" lights. You can turn them off if you want, but in practice you just turn the switch to "on" and leave it there for months or years, and the headlights do the right thing: switching automatically from daytime running lights to full on, and turning off when the vehicle does. If you're in urban San Francisco, high beams are unlikely to be relevant. So the autonomy doesn't mess with the lights at all. Except -- why does the software not check that the vehicle condition is safe in terms of headlights? Seems like a design oversight. How did a hazard like this get missed in hazard analysis? If this is the situation, it really calls into question whether the hazard analysis was done properly for the software. Even if this was fixed, what else did hazard analysis miss?

- A variant of the previous case: a passenger in the vehicle turned the headlights off as a prank. If this is possible, it is even more important for software monitoring of headlight state to be called out in the hazard analysis. But the check for headlights off obviously isn't there now.

- The software is completely ignorant of headlight state, and there is a maintenance tech who is supposed to turn the lights "on" as part of the check-out process each day to prepare the vehicle to run. This manual headlight-on check didn't get done. This is a huge issue with the Safety Management System, because if they missed that check what other checks did they miss? There are plenty of things to check on these vehicles for safety (e.g., sensor calibration, sensor cleaning/cleaner systems, correct software configuration). If they forget to turn on the headlight switch, what else did they forget? While this might be a "within normal tolerance" deviation, given lack of safety transparency Cruise doesn't get the benefit of the doubt. A broken SMS puts all road users at risk. This is a big deal unless proven otherwise. Firing, training, or having a stern talk with the human who made the "error" won't stop it from happening again. Blaming the driver/pilot/human never really does.

- There is no SMS. That's basically how Uber ATG ended up killing a pedestrian in Tempe, Arizona. If this is the case, it is even scarier.
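To make the idea of a software headlight check concrete, here is a minimal sketch in Python. It is purely illustrative: nothing is publicly known about how Cruise's software handles lighting, so every name here (`VehicleStatus`, `headlight_violation`, `may_start_mission`) and the ambient-light threshold are assumptions made up for the example. The point is only that a check of this kind is small enough that it is hard to see why a hazard analysis would leave it out.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class HeadlightState(Enum):
    OFF = "off"
    DAYTIME_RUNNING = "daytime_running"
    LOW_BEAM = "low_beam"


@dataclass(frozen=True)
class VehicleStatus:
    """Hypothetical snapshot of the signals a monitor would need."""
    headlights: HeadlightState
    ambient_lux: float  # assumed reading from an ambient light sensor


# Assumed threshold, not a regulatory value: below this, treat it as dark.
DARK_LUX_THRESHOLD = 400.0


def headlight_violation(status: VehicleStatus) -> Optional[str]:
    """Return a description of the problem, or None if lighting is acceptable."""
    is_dark = status.ambient_lux < DARK_LUX_THRESHOLD
    if is_dark and status.headlights is not HeadlightState.LOW_BEAM:
        return (
            f"dark conditions ({status.ambient_lux:.0f} lux) "
            f"but headlights are {status.headlights.value}"
        )
    return None


def may_start_mission(status: VehicleStatus) -> bool:
    """Refuse to begin driving while a headlight violation is present."""
    return headlight_violation(status) is None


if __name__ == "__main__":
    night_lights_off = VehicleStatus(HeadlightState.OFF, ambient_lux=5.0)
    print(headlight_violation(night_lights_off))
    print(may_start_mission(night_lights_off))
```

The same check could run before each mission and periodically while driving, which would cover both a missed manual step and a switch changed mid-trip. What the vehicle should do when the check fails is a separate design decision that this sketch does not address.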

Kyle Vogt

CEO at Cruise

I’d like to respectfully disagree with your characterization of this event. Allow me to provide some context.

We apply SMS rigorously across the company, which as you probably know includes estimating the safety risk for known hazards and having a process to continuously surface new ones.

We then apply resources accordingly to attempt to reduce the overall safety risk of our service as effectively as possible. This is a continuous process and we will continue even though we’ve passed the point where we can operate without backup drivers.

Rarely would this direct us to focus on things that are extremely unlikely to result in injury, such as lack of headlights on a single vehicle for a short duration of time. We have a process in place to ensure they are functioning properly, but the safety impact of that process failing is low.

Our use of SMS directs the majority of our resources towards higher risk areas like pedestrian and cyclist interactions. These are far more complex and of higher potential severity.

I firmly believe that this is the right approach, even if it means our vehicles occasionally do something that seems obviously wrong to a human.

@Kyle Vogt: Thank you for responding to my post. I am glad to hear that you have a Safety Management System in place now. This is excellent news.

Hopefully your SMS folks are telling you that the headlight-off incident should be treated as a serious safety process issue rather than being sloughed off as a "no big deal." A healthy safety culture would acknowledge this is a process failure that needs to be corrected rather than making excuses based on "safety impact of that process failing is low." Process failures in safety critical system operations are a problem -- period. An attitude that less critical processes don't matter is easily toxic for safety culture. Safety culture is how you do business, and if disregarding procedures that are seen as less than the most critical is how you do business, eventually that will catch up with you resulting in loss events. More simply: discounting a low severity process failure is at odds with your statement that you "apply SMS rigorously across the company."

I would say that headlights off has a substantive potential severity for other road users because they are signaling/warning devices to help people know to get out of the way if your vehicle should malfunction. For example, with headlights off you cannot take controllability credit for the other road user jumping out of your way if you fail to detect them, because they won't see headlights as an indicator of your approach at night. Moreover, having headlights on is the law.

Regardless of your statement that you are focusing on higher severity issues, a process failure for something as simple and obvious as making sure headlights are on at night is very disconcerting, and raises concerns over your safety process quality in general. A more transparent analysis of how that process failed and whether other higher severity process failures are occurring that have not been made public would be the right thing to do here to restore public trust. If this is the only time the headlights were off and your other pre-mission procedures have a high compliance rate, then OK, stuff happens. However -- if pre-mission procedures are hit or miss and you don't even keep track of procedural errors that don't result in a scary pedestrian near-miss, that is quite another. If you don't have the procedural metrics to show you are operating safely, then probably you aren't operating safely. Which is the case?

Your GM-issued VSSA from 2018 seems outdated and has no mention of an SMS. So it is difficult for the public to know what your plan is for safety. More transparency and communication regarding your safety process is essential to help build public trust.

I'm disappointed the cops seemed to minimize the importance of the headlights being off by failing to ticket the vehicle. Maybe the fines for illegal/unsafe AV operation need to be increased substantially to encourage car makers to take responsibility for their fully autonomous vehicles' safe operation. Traffic police need to be trained on how to respond to such incidents. At a minimum, I think they need some way of quickly identifying vehicles that are operating autonomously, and a means to bring them to a stop when unsafe operation occurs. It is standard practice in industry for dangerous machinery to be equipped with emergency-stop switches. Autonomous vehicles certainly qualify as potentially dangerous machinery.
