Too often, I’ve read documents or listened to panel sessions that rehash misleading or just plain incorrect industry talking points regarding autonomous vehicle standards and regulations. The current industry strategy seems to boil down to “Trust us, we know what’s best,” “Don’t stifle innovation,” and “Humans are bad drivers, so computers will be better.”

As far as I can tell, what’s really going on is that automated-vehicle companies are saying what they say both to avoid being regulated and to avoid having to follow their own industry safety standards. That strategy has not yielded long-term safety in other industries that have tried it, however, and I predict that in the long term it will not serve the automotive industry well either. It certainly does not encourage trust.

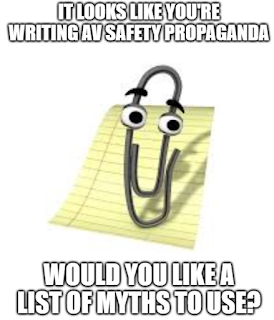

In this essay, I address the usual industry talking points and provide summary rebuttals. I’m intentionally simplifying and generalizing each talking point for clarity. It is my hope that other stakeholders, policymakers, and regulators can use this information to encourage AV companies to talk about the things that matter, such as ensuring the safety of all road users. We need more transparency and honest discussion — not a continuation of the current, empty rhetoric.

It is important to be clear that, from everything I’ve seen, the rank-and-file engineers, and especially the safety professionals — if the company has any — are trying to do the right thing. It is the government relations and policy people, not the engineers, who are providing the facile talking points. And it is the high-level managers — the ones who set budgets, priorities, and milestones — who affect whether safety teams have sufficient resources and authority to build an AV that will in fact be acceptably safe. So this essay is directed at them, not at the engineers.

For more detailed guidance to state and municipal DOT and DMV regulators, see this blog post.

(I've numbered these points for easier reference. Making yourself a bingo card and bringing it to the next regulatory/policy panel session you attend to keep score is optional.)

- Myth #1: 94% of crashes are due to human driver error, so AVs will be safer.

- Myth #2: You can have either innovation or regulation, not both.

This is a false dichotomy. You can easily have both innovation and regulation, if the regulation is designed to permit it.

To consider a simple example, you could regulate road testing safety by requiring conformance to SAE J3018. That standard is all about making sure that the human safety driver is properly qualified and trained. It also helps ensure that testing operations are conducted in a responsible manner consistent with good engineering validation and road safety practices. It places no constraints on the autonomy technology being tested. (Adaptation would be needed for a safety driver in a chase car if it was deemed impractical to have a safety driver sit in a prototype vehicle for road testing; see this blog post.)

For more general approaches, you can switch from the current approach of track and road testing to more goal-based testing. For example, a regulation that tells you what symbol to put on a dashboard to tell the driver sitting in a driver seat that there is low tire pressure (Federal Motor Vehicle Safety Standard [FMVSS] 138) does indeed constrain design by requiring a light, a dashboard, a driver seat, and a driver. But the lighted symbol isn’t the point; getting the low tire pressure addressed is the point, and regulations can focus on that instead (see this blog post). To be sure, this requires a change in regulatory structure. But the choice isn’t between innovation and regulation; it is between old regulation and new regulation, and that is a far different matter — especially if the new elements of the regulatory approach are based on conforming to standards the industry itself wrote.

The primary industry standards for deployed AVs in the U.S. are ISO 26262 (functional safety), ISO 21448 (safety of the intended function, or SOTIF), and ANSI/UL 4600 (system-level safety). Indeed, the current U.S. DOT proposal for regulation as of this writing is to get the AV industry to conform to precisely those standards. None of the standards stifles innovation. Rather, they promote a level playing field so companies can’t skimp on safety to gain a competitive timing advantage while putting other road users at undue risk.

If a company states that safety is its #1 priority, how can that possibly be incompatible with regulatory requirements to follow consensus safety standards written and approved by the industry itself?

- Myth #3: There are already sufficient regulations in place (for example in California).

Existing regulations (with one exception) do not require conformance to any industry computer-related safety standard, and do not set any level of required safety. At worst, it is the "Wild West." At best, there are requirements for driver licensing, insurance, and reporting. But requirements on assuring safety, if any, are little more than taking the manufacturer's word for it.

The one exception is the New York City DOT’s rule, which requires conformance to the SAE J3018 road testing safety standard and an attestation that road testing will not be more dangerous than a normal human driver. (See: https://rules.cityofnewyork.us/rule/autonomous-self-driving-vehicles/ . For bonus points, see if you can find any of these myths in the comments submitted in response to that rule, or in responses to the DOT proposal referenced under Myth #2.)

- Myth #4a: We don't need proactive AV regulation because of existing safety regulations.

The current Federal Motor Vehicle Safety Standard (FMVSS) regulations do not cover the safety of computer-based functionality. They are primarily about whether brakes work, whether headlights work, tire pressure, seat belts, airbags, and other topics that are basic safety building blocks at the vehicle behavior level. As the National Highway Traffic Safety Administration (NHTSA) would tell you, merely passing FMVSS mandates is not enough to ensure safety on its own; it is simply a useful and important check to weed out the most egregious safety problems based on experience.

There are no FMVSS or other regulatory requirements for automotive software safety in general, let alone for AV-specific software safety.

- Myth #4b: We don’t need proactive regulation because of liability concerns and NHTSA recalls.

The National Highway Traffic Safety Administration generally operates reactively to bad events. Sometimes, car companies voluntarily disclose a problem. Other times, a number of people have to die or be seriously injured before NHTSA forces some action (for example, eleven crashes involving Tesla driver assistance "autopilot" with emergency vehicles occurred over a 3.5-year period before action was initiated, with an expectation of many months to resolution). For mature technology, maybe this is OK — if one makes the assumption that the industry is populated with only good faith actors. But even with that assumption, it isn’t enough for immature AV technology and manufacturers new to automotive safety.

Aircraft safety regulation used to wait for crashes, but air travel got a lot safer when FAA and airlines became proactive. Most importantly, a regulatory policy that waits for loss of life and limb before taking action can result in a process that takes years to solve problems, even as the loss events continue.

- Myth #5: Existing safety standards aren't appropriate because (pick one or more):

  - they are not a perfect fit;

  - no single standard applies to the whole vehicle;

  - they would reduce safety because they prevent the developer from doing more;

  - they would force the AV to be less safe;

  - they were not written specifically for AVs.

These statements misrepresent how the real standards work. ISO 26262, ISO 21448, and ANSI/UL 4600 all permit significant flexibility to be used in a way that makes sense. All three work together to fit any safe AV.

ISO 26262 can apply to any light vehicle on the road for the parts that aren’t the machine-learning–based mechanisms. Cars still have motors, brakes, wheels, and other non-autonomous features that have to be safe. The hardware on which the autonomy software runs can still conform to ISO 26262. All of these are covered by ISO 26262, and the standard specifically permits extension to additional scope.

ISO 21448 is explicitly scoped for AVs in addition to ADAS. Its origin story includes being proposed as an addition to ISO 26262, and it is written to be compatible with that standard.

ANSI/UL 4600 is specifically written for AVs. It applies to the whole vehicle as well as support infrastructure. The voting committee includes experts on ISO 26262 and ISO 21448, so it is compatible with those standards, and in fact it leads naturally to using all three of these standards and not just a “single” standard. (Anyone who knows standards knows it is improbable that any safety-critical system would involve use of just a single standard.)

There is no reason not to conform to these standards, and U.S. DOT has already proposed this set for the United States. All of these standards allow developers to do more than required. All of them are flexible enough to accommodate any AV. None of them force a company to be less safe (really, that argument is laughable). None of the standards constrain the technical approach used.

- Myth #6: Local and state regulations need to be stopped to avoid a “patchwork” of regulations that inhibits innovation.

- Myth #7: We conform to the “spirit” of ISO 26262, etc.

AV developers typically justify their “in the spirit of” statements by advancing the theory that there might be a need for deviation from the standard (beyond any deviations that the standards already permit). The statements never specify what the possibly required deviations might be, and I’ve never heard a concrete example at any of the many standards meetings I have attended. (I’m on the U.S. voting committees for all the standards listed in this essay.)

I’ve never heard an AV company argue that it conforms to the intent of a standard, only to the “spirit” of the standard, whatever that means. Indeed, any “in the spirit” statement is meaningless, because the standards I’ve mentioned are all flexible enough that if you actually conform to the spirit and intent of the standard, you can conform to the standard. The standards explicitly permit not doing things that don’t apply and deviating from inapplicable clauses with appropriate decision processes. Doing that still permits conformance to the actual standard.

I worry that AV companies’ “spirit” claims are really code for cutting corners on safety when they think it is economically attractive to do so, or they’re in a hurry, or both.

A reasonable alternative explanation is simply that lawyers might want to avoid committing to something if nobody is forcing them to do so. That is understandable from their point of view, but it impairs transparency. The dark side of the strategy is that it gives cover to companies that are not the best actors and want to hide the corners they cut. If companies are worried that they’ll be called out for not following a standard after a crash, they should spend the resources to actually follow the standard. Or they should not spend so much effort making public claims about safety being their top priority.

- Myth #8: Government regulators aren’t smart enough about the technology to regulate it, so there should be no regulations. Industry is smarter and should just do what it thinks is best.

Following the proposed U.S. DOT plan to invoke the industry standards mentioned earlier makes sense, because it addresses precisely this concern. Even two years ago, the standards weren’t really there, but now they are. Industry decided which standards made sense, and then they created them.

If we could trust industry — any industry — to self-police safety in the face of short-term profit incentive and organizational dysfunction, we wouldn’t need regulators. But that isn’t the real world. Trusting the automotive industry to regulate development with immature, novel technology is unlikely to work. It’s possible, and important, for the industry to achieve a healthy balance between taking responsibility for safety and accepting regulatory oversight. Near-zero regulatory influence until after the crashes start piling up is not the right balance.


- Myth #9: Disclosing testing data gives away the secret sauce for autonomy.

Road testing safety is all about whether a human safety driver can effectively keep a test vehicle from creating elevated risk for other road users. That has nothing to do with the secret-sauce autonomy intellectual property. It is about the effectiveness of the safety driver.

Companies sometimes say it would be too difficult or expensive to get or provide data. If companies don’t have data to prove that they are testing safely, they shouldn’t be testing. If they think that providing testing safety data is too expensive, they can’t afford the price of admission for using public roads as a testbed.

Testing data need not include anything about the autonomy design or performance. An example would be revealing how often test drivers fall asleep while testing. A non-zero result might be embarrassing, but how does that divulge secret autonomy technology data?

By the same token, regulators should not be asking for autonomy performance data such as how often the system “disengages” because of an internal fault or software issue. They should be asking how often other road users are placed at risk, which is an entirely different story. So the miles and locations tested, along with the collisions and near-miss situations that occur, make sense as measures of public exposure to risk. But metrics related to the quality of the autonomy itself do not, unless and until that data is being used to justify testing without a safety driver.

- Myth #10: Delaying deployment of AVs is tantamount to killing people.

- Myth #11: We haven't killed anyone, so that must mean we are safe.

- Myth #12: Other states/cities let us test without any restrictions; you should too.