The tragic Tesla crash in Paris in December 2021 highlights a completely dysfunctional vehicle defect investigation process. This story is well done, but it follows the usual broken investigation narrative (not the fault of the reporters):

The Paris government says: "no indication" of a "technical fault"

Well, did they look at software as a potential cause? Almost certainly not. Does Tesla follow international software safety standards (e.g., ISO 26262)? Probably not. So it is at least possible this is a software defect.

What kind of software defect, you ask? Well, what if a software defect misreports the position of the accelerator pedal? What if there is a botched redundancy pattern in the electronic controls? What if flaky code turns on Autopilot (AP)? Mistakes like these have shown up in other production vehicles.

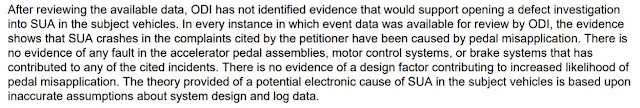
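To make "botched redundancy pattern" concrete, here is a minimal sketch of what such a mistake could look like for a pedal with two redundant position sensors. This is purely illustrative and is not based on Tesla's code or any other vehicle's. The function names, the `PedalReading` type, and the 5% `MAX_DISAGREEMENT` tolerance are all assumptions made up for this example.

```python
from dataclasses import dataclass

# Hypothetical tolerance: maximum allowed disagreement between the two
# sensors, expressed as a fraction of full pedal travel.
MAX_DISAGREEMENT = 0.05


@dataclass(frozen=True)
class PedalReading:
    position: float  # 0.0 = released, 1.0 = fully pressed
    valid: bool      # False means the sensors disagreed or were out of range


def botched_pedal_position(sensor_a: float, sensor_b: float) -> PedalReading:
    """Botched redundancy: averages the sensors but never compares them."""
    return PedalReading(position=(sensor_a + sensor_b) / 2.0, valid=True)


def checked_pedal_position(sensor_a: float, sensor_b: float) -> PedalReading:
    """Cross-checks the two sensors and fails safe (zero pedal) on a mismatch."""
    in_range = 0.0 <= sensor_a <= 1.0 and 0.0 <= sensor_b <= 1.0
    agree = abs(sensor_a - sensor_b) <= MAX_DISAGREEMENT
    if not (in_range and agree):
        return PedalReading(position=0.0, valid=False)
    # Use the lower reading so a small disagreement never adds torque demand.
    return PedalReading(position=min(sensor_a, sensor_b), valid=True)
```

If one sensor is stuck at full travel while the driver's foot is off the pedal, `botched_pedal_position(0.0, 1.0)` reports half throttle as a valid reading, while `checked_pedal_position(0.0, 1.0)` flags the mismatch and commands zero. Both versions have two sensors, so both might look "redundant" on a block diagram. Only an investigation that actually examines the software would tell them apart.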

Well, NHTSA exonerated 200+ Teslas earlier this year, right? Read the report. NHTSA DID NOT CONSIDER SOFTWARE DEFECTS at all in their investigation. If you don't even look, you won't find it. Read it for yourself!

In fact, there is a multi-decade pattern of NHTSA ignoring potential software defects in investigations unless they are readily reproduced. If a problem isn't readily reproduced, the conclusion is that it must have been the human driver. Scholarly paper on this history here.

We still don't know what happened here. But if it is a software problem across multiple vehicles, we're not going to fix it by ignoring it. Historically, the industry and NHTSA blame the driver to avoid having to deal with software safety.

I hope the French can do better here.

I hear so often "no other cars have that type of defect," or "it's not possible software is that bad." That's why I compiled this list of 100+ dangerous software defects that have resulted in recalls (i.e., the ones companies actually fixed): https://betterembsw.blogspot.com/p/potentially-deadly-automotive-software.html
