The Tesla rolling stop discussion continues, with thoughtful people sometimes not sure which way to go on this. Here is a breakdown of the issues...

This is a big deal and should be treated as such:

- It's illegal. Ignoring the law until you get caught is untrustworthy behavior (in this case apparently part of a pattern). Tesla could have and should have worked this out with NHTSA in advance, then lobbied for regulatory change if needed.

- Any rule-bending behavior needs to adjust to local conditions and context to be safe-ish, which wasn't done here. Local customs are relevant. So is location: for example, not doing it in school zones or near playgrounds.

- These vehicles are not yet competent drivers. They regularly try to hit vehicles and other things they detect (shown as detected on screen -- see YouTube). Any statement that they will proceed only when conditions are clear ignores the severe issue that they are clearly not competent judges of when intersections are clear. (Making the safety driver responsible for stopping the car from running over a pedestrian is a ridiculous argument for building in dangerous behavior, but I know it will be made.)

- Even if you think rolling stops are fine (I disagree), the full stop is still necessary -- for the safety driver to have time to assess safety before going.

- The top end of 5.6 mph is jogging speed -- more like a yield sign -- it's not a 0.5 mph crawl. If the vehicle needs to creep out into the intersection for sight lines, the procedure I remember from my driver training is very clear: first stop completely, then creep as necessary.

- They are removing agency from the human driver in deciding when to break the law. People selecting a "rolling stop" mode had no idea what they were really signing up for (true behaviors were only disclosed in the recall) -- but were going to bear the full burden of any liability for hitting a bicyclist or child at an intersection. That is really wrong. Deciding to break the law on behalf of a driver in an opaque way, without involving driver approval every time it is done, is inherently a problem.

- Arguing you'll be safer because you don't make bad human driver choices and then emulating human drivers who bend rules is not a particularly convincing approach to building trust.

- This differs in kind from crossing a centerline to go around a disabled vehicle. Shaving a few seconds off a trip does not justify breaking traffic laws. There is no pressing need, and no overriding mission-completion requirement, to break that rule.

- Saying "people can break the law" is whataboutism. There is a huge difference between setting a law-breaking policy that sticks basically forever unless changed and instance-by-instance human choices to direct a machine to violate a speed limit (or whatever), with that human driver being connected to the context the decision is being made in -- and to the consequences. If they wanted to have the ability to do rolling stops, they could have just had the driver tap the accelerator as the car slows down, and used that as a basis for training for when regulations were relaxed to account for this behavior. (Not condoning breaking traffic laws -- but pointing out that there is an obvious alternative that engaged the safety driver in the process and that was not used.)

- NHTSA has better things to do with its limited resources than play Whac-a-mole with Tesla. Tesla can flout rules faster than NHTSA has resources to enforce them. This appears to be just one incident in a larger campaign of that sort.
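
To put the 5.6 mph figure from the list above in perspective, here is a back-of-the-envelope unit conversion showing how much ground a vehicle covers each second at that speed versus a 0.5 mph crawl. The only numbers taken from this post are 5.6 mph and 0.5 mph; the helper name `mph_to_feet_per_second` is made up for illustration.

```python
# Back-of-the-envelope conversion; the helper name is illustrative only.
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def mph_to_feet_per_second(mph: float) -> float:
    """Convert miles per hour to feet per second."""
    return mph * FEET_PER_MILE / SECONDS_PER_HOUR


rolling_stop_top_speed = mph_to_feet_per_second(5.6)  # about 8.2 ft/s
crawl_speed = mph_to_feet_per_second(0.5)             # about 0.7 ft/s

print(f"5.6 mph = {rolling_stop_top_speed:.1f} ft/s")
print(f"0.5 mph = {crawl_speed:.1f} ft/s")
```

At the top end, the vehicle is moving roughly 8 feet every second, about eleven times faster than a 0.5 mph creep.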

Sure, revisiting regulations to codify "do something reasonable" makes sense. I've been pointing this out as a challenge for years. But "move fast and break things" while unilaterally deciding what you can get away with is not the right approach to safety.

Reference AP article here: https://www.npr.org/2022/02/01/1077274384/tesla-recalls-autos-over-software-that-allows-them-to-roll-through-stop-signs

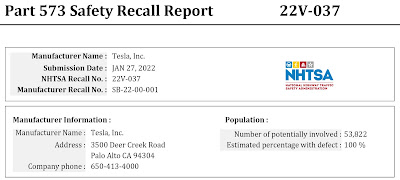

NHTSA Part 573 Safety Recall Report: https://static.nhtsa.gov/odi/rcl/2022/RCLRPT-22V037-4462.PDF
