Automated cars are unlikely to get rid of all the "94% human error" mishaps that are often cited as a safety rationale. But there is certainly room for improvement compared to human drivers. Let's sort out the hype from the data.

You've heard that the reason we desperately need automated cars is that 94% of crashes are due to human error, right? And that humans make poor choices such as driving impaired, right? Surely, then, autonomous vehicles will give us a factor of 10 or more improvement simply by not driving stupid, right?

Not so fast. That's not actually what the research data says. In the words of NTSB Chair Jennifer Homendy: It Ain't 94%!

It's important for us to set realistic expectations for this promising new technology. Probably it's more like 50%. Let's dig deeper.

(NEW: video explainer here 8 minutes https://youtu.be/pYb4X5aJhgU)

The US Department of Transportation publishes an impressive amount of data on traffic safety -- which is a good thing. And, sure enough, you can find the 94% number in DOT HS 812 115 Traffic Safety Facts, Feb. 2015 (https://crashstats.nhtsa.dot.gov/Api/Public/ViewPublication/812115), which says: "The critical reason was assigned to drivers in an estimated 2,046,000 crashes that comprise 94 percent of the NMVCCS crashes at the national level. However, in none of these cases was the assignment intended to blame the driver for causing the crash" (emphasis added). There’s the 94% number. But wait -- they’re not actually blaming the driver for those crashes! We need to dig deeper here.

Before digging, it's worth noting that this isn't really 2015 data, but rather a 2015 summary of a data analysis report published in 2008 (DOT HS 811 059 National Motor Vehicle Crash Causation Survey, July 2008 https://crashstats.nhtsa.dot.gov/Api/Public/ViewPublication/811059). If you want to draw your own conclusions you should look at the original study to make sure you understand things.

Now that we can look at the primary data, first we need to see what assigning a "crash reason" to the driver really means. Page 22 of the 2008 report sheds some light on this. Basically, if something goes wrong and the driver should have (arguably) been able to avoid the crash, the mishap “reason” is assigned to the driver. That's not at all the same thing as the accident being the driver's fault due to an overt error that directly triggered the mishap. To its credit, the original report makes this clear. This means that the idea of crashes or fatalities being “94% due to driver error” differs from the report's findings in a subtle but critical way.

Indeed, many crashes are caused by drunk drivers. But other crashes are caused by something bad happening that the driver doesn't manage to recover from. Still other crashes are caused by the limits of human ability to safely operate a vehicle in difficult real-world situations despite the driver not having violated any rules. We need to dig still deeper to understand what's really going on with this report.

Page 25 of the report sheds some light on this. Of the 94% of mishaps attributed to drivers, there are a number of clear driver misbehaviors listed, including distracted driving, illegal maneuvers, and sleeping. But the #1 problem is "Inadequate surveillance 20.3%." In other words, a fifth of mishaps blamed on drivers are the driver not correctly identifying an obstacle, missing some road condition, or other problem of that nature. While automated cars might have better sensor coverage than a human driver's eyes, misclassifying an object or being fooled by an unusual scenario could happen with an automated car just as it can happen to a human. (In other words, some of the crashes counted in the 94% could just as well end up blamed on an automated driver.) This biggest bin isn’t drunk driving at all, but rather gets at a core reason why building automated cars is so hard. Highly accurate perception is difficult, whether you're a human or a machine.

Other driver bins in the analysis include "False assumption of other's action 4.5%," "Other/unknown recognition error 2.5%," "Other/unknown decision error 6.2%," and "Other/unknown driver error 7.9%." That’s another 21% that might or might not be impaired driving, and might be a mistake that could also be made by an automated driver.
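For readers who want to check the arithmetic, here is a minimal sketch that adds up the bins quoted above. It assumes the percentages are shares of the driver-attributed crashes, which is how this post reads the 2008 report; the variable names are mine, not the report's.

```python
# Driver critical-reason bins as quoted in this post (percent).
# Assumption: these are shares of driver-attributed crashes.
inadequate_surveillance = 20.3

other_bins = {
    "False assumption of other's action": 4.5,
    "Other/unknown recognition error": 2.5,
    "Other/unknown decision error": 6.2,
    "Other/unknown driver error": 7.9,
}

# Sum of the bins that might or might not involve impairment.
other_total = sum(other_bins.values())

# Add the single biggest bin to see how much is not clearly misbehavior.
combined = inadequate_surveillance + other_total

print(f"Other ambiguous bins: {other_total:.1f}%")
print(f"Including inadequate surveillance: {combined:.1f}%")
```

Taken together, roughly two-fifths of the driver-attributed bins describe perception or judgment problems rather than obvious misbehavior.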

So in the end, the 94% human attribution for mishaps isn't all impaired or misbehaving drivers. Rather, many of the reasons assigned to drivers sound more like imperfect drivers. It's increasingly clear that autonomous vehicles can also be imperfect. For example, they can misclassify objects on the road. So we can't blithely claim that automated cars won't have any of the failures that the study attributes to human error. Rather, at least some of these problems will likely change from being assigned to "human driver error" to instead being "robot driver error." Humans aren't perfect. Neither are robots. Robots might be better than humans in the end, but that area is still a work in progress and we do not yet have data to prove that it will turn out in the robot driver's favor any time soon.

A more precise statement of the study's findings is that while it is indeed true that 94% of mishaps might be attributed to humans, significantly less than that number is attributable to poor human choices such as driving drunk. While I certainly appreciate that computers don't drive drunk, they just might be driving buggy. And even if they don’t drive buggy, making self-driving cars just as good as an unimpaired driver is unlikely to deliver anything near the 94% reduction so often tossed around. Perception, modeling expected behavior of other actors, and dealing with unexpected situations are all notoriously difficult to get right, and are all cited sources of mishaps. So this should really be no surprise. It is possible automated cars might be a lot better than people eventually, but this data doesn't support that expectation at the 94% better level.

We can get a bit more clarity by looking at another DOT report that might help set more realistic expectations. Let's take a look at 2016 Fatal Motor Vehicle Crashes: Overview (DOT HS 812 456; access via https://www.nhtsa.gov/press-releases/usdot-releases-2016-fatal-traffic-crash-data). The most relevant numbers are below (note that there is overlap, so the categories add up to more than 100%):

I'm not going to attempt a detailed analysis, but certainly we can get the broad brush strokes from this data. I come up with the following three conclusions:

1. Clearly impaired driving and speeding contribute to a large number of fatalities (ballpark twenty thousand, although there are overlaps in the categories). So there is plenty of low hanging fruit to go after if we can create a automated vehicle that is as good as an unimpaired human. But it might be more like a 2x or 3x improvement than a 20x improvement. Consider the typical 100 million mile between fatality number that is quoted for human drivers. If you remove the impaired drivers, based on this data you get more like about 200 million miles between fatalities. It will take a lot to get automated cars that good. Don't get me wrong; a 2x or so improvement is potentially a lot of lives saved. But it's not zero fatalities, and it's nowhere near a 94% reduction.

2. Almost one-fifth of the fatalities are pedestrians, cyclists, and other at-risk road users. Detecting and avoiding that type of crash is notoriously difficult for autonomous vehicle technology. Occupants have all sorts of crash survivability features. Pedestrians -- not so much. Ensuring non-occupant safety has to be a high priority if we're going to deploy this technology and avoid an unintended consequence of a rise in pedestrian fatalities potentially offsetting gains in occupant safety.

3. Well over a quarter of the vehicle occupant fatalities are attributed to not wearing seat belts. Older generations have lived through the various technological attempts to enforce seat belt use. (Remember motorized seat belts?) The main takeaway has been that some people will go to extreme lengths to avoid wearing seat belts. It's difficult to see how automated car technology alone will change that. (Yes, if there are zero crashes the need for seat belts is reduced, but we're not going to get there any time soon. It seems more likely that seat belts will play a key part in reducing fatalities when coupled with hopefully less violent and less frequent crashes.)

So where does that leave us?

There is plenty of potential for automated cars to help with safety. But the low hanging fruit is more likely cutting fatalities perhaps in half if we can achieve parity with an average well behaved human driver. The 94% number so often quoted will take a lot more than that. Over time, hopefully, automated cars can continue to improve further. ADAS features such as automatic emergency braking can likely help too. And for now, even getting rid of half the traffic deaths is well worth doing, especially if we make sure to consider pedestrian safety.

Driving safely is a complex task for man or machine. It would be a shame if a hype roller coaster ride triggers disillusionment with technology that can in the end improve safety. Let's set reasonable expectations so that automated car technology is asked to provide near term benefits that it can actually deliver.

Update: Mitch Turk pointed out another study from 2016 that is interesting.

http://www.pnas.org/content/pnas/113/10/2636.full.pdf

This has data from monitoring drivers who experienced crashes. The ground rules for the experiment were a little different, but it has data explaining what was going on in the vehicle before a crash. A significant finding is that driver distraction is an issue. (Note that this data is several years later than the previous study, so that makes some sense.)

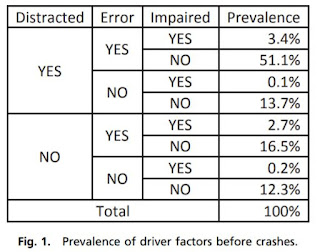

For our purposes an interesting finding is that 12.3% of crashes were NOT distracted/NOT Impaired/NOT Human Error:

Beyond that, it seems likely that some of the other categories contain scenarios that could be difficult for an AV, such as undistracted, unimpaired errors (16.5%).

Update 7/18/2018: Laura Fraade-Blanar from RAND send me a nice paper from 2014 on exactly this topic:

https://web.archive.org/web/20181121213923/https://www.casact.org/pubs/forum/14fforum/CAS%20AVTF_Restated_NMVCCS.pdf

The study looked at the NMVCSS data from 2008 and asked the question of what this data means for autonomy, accounting for issues that autonomy is going to have trouble addressing. They say that "49% of accidents contain at least one limiting factor that could disable the [autonomy] technology or reduce its effectiveness." They also point out that autonomy can create new risks not present in manually driven vehicles.

So this data suggests that eliminating perhaps half of autonomous vehicle crashes is a more realistic goal.

NOTES:

Indeed, many crashes are caused by drunk drivers. But other crashes are caused by something bad happening that the driver doesn't manage to recover from. Still other crashes are caused by the limits of human ability to safely operate a vehicle in difficult real-world situations despite the driver not having violated any rules. We need to dig still deeper to understand what's really going on with this report.

Page 25 of the report sheds some light on this. Of the 94% of mishaps attributed to drivers, there are a number of clear driver misbehaviors listed, including distracted driving, illegal maneuvers, and sleeping. But the #1 problem is "Inadequate surveillance 20.3%." In other words, a fifth of mishaps blamed on drivers are the driver not correctly identifying an obstacle, missing some road condition, or other problem of that nature. While automated cars might have better sensor coverage than a human driver's eyes, misclassifying an object or being fooled by an unusual scenario could happen with an automated car just as it can happen to a human. (In other words, sometimes driver blame could be assigned to an automated driver, even if part of the 94%.) This biggest bin isn’t drunk driving at all, but rather gets to a core reason of why building automated cars is so hard. Highly accurate perception is difficult, whether you're a human or a machine.

Other driver bins in the analysis include "False assumption of other's action 4.5%," "Other/unknown recognition error 2.5%," Other/unknown decision error 6.2%," and "Other/unknown driver error 7.9%". That’s another 21% that might or might not be impaired driving, and might be a mistake that could also be made by an automated driver.

So in the end, the 94% human attribution for mishaps isn't all impaired or misbehaving drivers. Rather, many of the reasons assigned to drivers sound more like imperfect drivers. It's increasingly clear that autonomous vehicles can also be imperfect. For example, they can misclassify objects on the road. So we can't blithely claim that automated cars won't have any of failures that the study attributes to human error. Rather, at least some of these problems will likely change from being assigned to "human driver error" to instead being "robot driver error." Humans aren't perfect. Neither are robots. Robots might be better than humans in the end, but that area is still a work in progress and we do not yet have data to prove that it will turn out in the robot driver's favor any time soon.

A more precise statement of the study's findings is that while it is indeed true that 94% of mishaps might be attributed to humans, significantly less than that number is attributable to poor human choices such as driving drunk. While I certainly appreciate that computers don't drive drunk, they just might be driving buggy. And even if they don’t drive buggy, making self driving cars just as good as an unimpaired driver is unlikely to get near the 94% number so often tossed around. Perception, modeling expected behavior of other actors, and dealing with unexpected situations are all notoriously difficult to get right, and are all cited sources of mishaps. So this should really be no surprise. It is possible automated cars might be a lot better than people eventually, but this data doesn't support that expectation at the 94% better level.

We can get a bit more clarity by looking at another DOT report that might help set more realistic expectations. Let's take a look at 2016 Fatal Motor Vehicle Crashes: Overview (DOT HS 812 456; access via https://www.nhtsa.gov/press-releases/usdot-releases-2016-fatal-traffic-crash-data). The most relevant numbers are below (note that there is overlap, so the categories add up to more than 100%):

- Total US roadway fatalities: 37,461

- Alcohol-impaired-driving fatalities: 10,497

- Unrestrained passenger vehicle fatalities (not wearing seat belts): 10,428

- Speeding-related fatalities: 10,111

- Distraction-affected fatalities: 3,450

- Drowsy driving fatalities: 803

- Non-occupant fatalities (sum of pedestrians, cyclists, other): 7,079

I'm not going to attempt a detailed analysis, but certainly we can get the broad brush strokes from this data. I come up with the following three conclusions:

1. Clearly impaired driving and speeding contribute to a large number of fatalities (ballpark twenty thousand, although there are overlaps in the categories). So there is plenty of low hanging fruit to go after if we can create an automated vehicle that is as good as an unimpaired human. But it might be more like a 2x or 3x improvement than a 20x improvement. Consider the typical figure of 100 million miles between fatalities that is quoted for human drivers. If you remove the impaired drivers, based on this data you get something more like 200 million miles between fatalities. It will take a lot to get automated cars that good. Don't get me wrong; a 2x or so improvement is potentially a lot of lives saved. But it's not zero fatalities, and it's nowhere near a 94% reduction.

2. Almost one-fifth of the fatalities are pedestrians, cyclists, and other at-risk road users. Detecting and avoiding that type of crash is notoriously difficult for autonomous vehicle technology. Occupants have all sorts of crash survivability features. Pedestrians -- not so much. Ensuring non-occupant safety has to be a high priority if we're going to deploy this technology and avoid an unintended consequence of a rise in pedestrian fatalities potentially offsetting gains in occupant safety.

3. Well over a quarter of the vehicle occupant fatalities are attributed to not wearing seat belts. Older generations have lived through the various technological attempts to enforce seat belt use. (Remember motorized seat belts?) The main takeaway has been that some people will go to extreme lengths to avoid wearing seat belts. It's difficult to see how automated car technology alone will change that. (Yes, if there are zero crashes the need for seat belts is reduced, but we're not going to get there any time soon. It seems more likely that seat belts will play a key part in reducing fatalities when coupled with hopefully less violent and less frequent crashes.)
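The rough numbers behind these three conclusions can be reproduced with a short sketch. It is back-of-the-envelope only: it assumes the alcohol and speeding categories do not overlap (they do, so the improvement factor is an upper bound), that miles driven stay the same, and that occupant fatalities can be approximated as total minus non-occupant fatalities. The constant names are mine.

```python
# Figures quoted above from the 2016 fatal crash overview.
TOTAL = 37_461
ALCOHOL_IMPAIRED = 10_497
SPEEDING = 10_111
UNRESTRAINED = 10_428
NON_OCCUPANT = 7_079

# Conclusion 1. Assumption: no overlap between the two categories,
# so this overstates how many fatalities could be removed.
impaired_or_speeding = ALCOHOL_IMPAIRED + SPEEDING
remaining = TOTAL - impaired_or_speeding
improvement_factor = TOTAL / remaining

# Assumption: 100 million miles per fatality baseline, same miles driven.
BASELINE_MILES_PER_FATALITY = 100e6
miles_per_fatality = BASELINE_MILES_PER_FATALITY * improvement_factor

# Conclusion 2: share of all fatalities that are non-occupants.
non_occupant_share = NON_OCCUPANT / TOTAL

# Conclusion 3. Assumption: occupants = total minus non-occupants.
unrestrained_share = UNRESTRAINED / (TOTAL - NON_OCCUPANT)

print(f"Impaired or speeding (upper bound): {impaired_or_speeding:,}")
print(f"Improvement factor: {improvement_factor:.1f}x")
print(f"Miles between fatalities: {miles_per_fatality / 1e6:.0f} million")
print(f"Non-occupant share: {non_occupant_share:.1%}")
print(f"Unrestrained share of occupants: {unrestrained_share:.1%}")
```

Even with the generous no-overlap assumption, the result lands a little above 2x, which is consistent with the "about 200 million miles" ballpark rather than anything close to a 94% reduction.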

So where does that leave us?

There is plenty of potential for automated cars to help with safety. But the low hanging fruit is more likely cutting fatalities perhaps in half if we can achieve parity with an average well behaved human driver. The 94% number so often quoted will take a lot more than that. Over time, hopefully, automated cars can continue to improve further. ADAS features such as automatic emergency braking can likely help too. And for now, even getting rid of half the traffic deaths is well worth doing, especially if we make sure to consider pedestrian safety.

Driving safely is a complex task for man or machine. It would be a shame if a hype roller coaster ride triggers disillusionment with technology that can in the end improve safety. Let's set reasonable expectations so that automated car technology is asked to provide near term benefits that it can actually deliver.

Update: Mitch Turk pointed out another study from 2016 that is interesting.

http://www.pnas.org/content/pnas/113/10/2636.full.pdf

This has data from monitoring drivers who experienced crashes. The ground rules for the experiment were a little different, but it has data explaining what was going on in the vehicle before a crash. A significant finding is that driver distraction is an issue. (Note that this data is several years later than the previous study, so that makes some sense.)

For our purposes an interesting finding is that 12.3% of crashes were NOT distracted/NOT Impaired/NOT Human Error:

Beyond that, it seems likely that some of the other categories contain scenarios that could be difficult for an AV, such as undistracted, unimpaired errors (16.5%).

Update 7/18/2018: Laura Fraade-Blanar from RAND sent me a nice paper from 2014 on exactly this topic:

https://web.archive.org/web/20181121213923/https://www.casact.org/pubs/forum/14fforum/CAS%20AVTF_Restated_NMVCCS.pdf

The study looked at the NMVCCS data from 2008 and asked the question of what this data means for autonomy, accounting for issues that autonomy is going to have trouble addressing. They say that "49% of accidents contain at least one limiting factor that could disable the [autonomy] technology or reduce its effectiveness." They also point out that autonomy can create new risks not present in manually driven vehicles.

So this data suggests that eliminating perhaps half of vehicle crashes is a more realistic goal for autonomous vehicles.

NOTES:

For an update on how many organizations are misusing this statistic, see:

https://usa.streetsblog.org/2020/10/14/the-94-solution-we-need-to-understand-the-causes-of-crashes/

The studies referenced, as with other similar studies, don’t attempt to identify mishaps that might have been caused by computer-based system malfunction. In studies like this, potential computer-based faults and non-reproducible faults in general are commonly attributed to driver error unless there is compelling evidence to the contrary. So the human error numbers must be taken with a grain of salt. However, this doesn’t change the general nature of the argument being made here.

The data supporting the conclusion is more than 10 years old. So it would be no surprise if ADAS technology has been changing things. In any event, looking into current safety expectations should arguably require separating the effects of ADAS systems such as automatic emergency braking (AEB) from functions such as lane keeping and speed control. ADAS can potentially improve safety even for well behaved human drivers.

Some readers will want to argue for a more aggressive safety target than 2x to 3x safer than average humans. I'm not saying that 2x or 3x is an acceptable safety target -- that's a whole different discussion. What I'm saying is that it is a much more likely near term success target than the 94% number tossed around.

There will no doubt be different takes on how to interpret data from these and other reports. That’s a vitally important discussion to have. But the point of this essay is not to argue how safe is safe enough. Rather, the point is to have a discussion about realistic expectations based on data and engineering, not hype. So if you want to argue a different outcome than I propose, that's great. But please bring data instead of marketing claims to the discussion. Thanks.
