Recent news has people questioning whether autonomous vehicles are viable. Promises that victory is right around the corner are too optimistic. But it's far too early to declare defeat. There is a lot to process. But a pressing technology roadmap question is: if robotaxis aren't really the answer, what happens next for passenger vehicles?

A robotaxi ride that did not go as expected. (Total Recall 1990)

We can expect OEMs to double down on their Level 2→2+→3 strategies. But there is a very real risk of a race to the bottom as companies scramble to deploy shiny automation technology while skipping over reasonable, industry-created safety practices. A subtle, yet crucial, point is that asking a driver to supervise a driver assistance system is a completely different situation than putting a civilian car owner in the position of being an untrained autonomous vehicle test driver.

Where are we now?

The recent demise of Argo AI has made it crystal clear that Big Auto is pivoting away from robotaxis. Big Auto has kept plugging away at driver assistance systems while hedging its bets by taking stakes in Level 4 robotaxi companies. Those Level 4 companies were carefully kept at arm's length against the day it was time to close out those hedges. And here we are.

Other companies continue to work on robotaxis -- most notably Waymo, Cruise, and some players in China that are carrying passengers on public roads. Waymo seems unconstrained by runway length. Presumably Cruise has a year-end milestone as they did in 2020 and 2021, and we'll see how that goes. Meanwhile Tesla continues to celebrate anniversaries of its promise to put a million robotaxis on the road within a year. Heavy trucks, parcel delivery, and low speed shuttles are also part of the mix, but face their own challenges that are beyond the scope of this discussion.

For now let's entertain the possibility that robotaxis are not going to happen anytime soon. It seems likely to take years of hammering away at the heavy tail of edge cases to get there. Sure, some of us can get a demo ride in a Taxi of Tomorrow in a couple cities. But let's assume that robotaxis are more of a Disney-esque experience than a practical tool for profitable mobility at scale anytime soon.

What happens next?

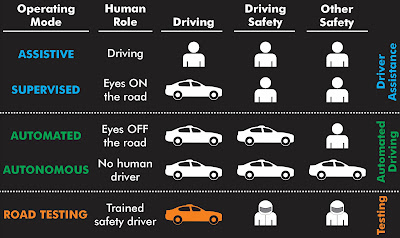

We can expect to see OEMs double down on evolutionary strategies. Ford has pretty much said as much, promising a Level 3 system is on the way. It will probably be talked about as climbing up the SAE Levels from 2 to 3 to 4, which has been their narrative all along. That narrative has significant issues because it makes the common mistake of using SAE levels as a progressive ladder to climb -- which it is definitely not. But it will be the messaging framework nonetheless.

In the mix are several options:

- Level 2 highway cruising systems that are already on the road

- Level 2+ add-ons, but with the driver still responsible for safety

- Traffic jam pilot as the first step to drivers taking attention off the road

- Level 3/4 capabilities beyond traffic jams (harder than it might seem)

- Abuse of Level 2/2+ designations to evade regulation

We'll see all of these in play. Each option comes with its own challenges.

Level 2 systems: highway cruising

The general idea is that first you build an SAE Level 2 system that has a speed+steering cruise control capability (automated speed control and automated lane keeping). This is intended for use on highways and other situations involving well behaved, in-lane travel. This functionality is already widely available on new high-end vehicles. The driver is responsible for continuously monitoring the car's performance and the road conditions, and intervening instantly if necessary -- whether the car asks for intervention or not.

A significant challenge for these systems is ensuring that drivers remain attentive in spite of inevitable automation complacency. This requires a highly capable driver monitoring system (DMS) that, for example, tracks where the driver is looking to ensure constant monitoring of the situation on the roadway.

Performance standards for monitoring driver attentiveness are in their infancy, and capabilities of the DMS on different vehicles vary dramatically. It is pretty clear that a camera-based system is much more effective than steering wheel touch sensors. And a camera that can see the driver's eye gaze direction, including having infrared emitters for night operation, is likely to be more effective than one that can't.

Another crucial safety feature that should be implemented is restricting operation of Level 2 features to the conditions they are designed to be safe in. The SAE J3016 taxonomy standard makes this totally optional -- which is one of the many reasons J3016 is not a safety standard, and why no government should write the SAE Levels into their safety regulations. But if the automation is supposed to only be used on divided highways with no cross-streets, then it should refuse to engage unless that is the road type it is being used on. Some companies restrict their vehicle to operation on specific roads, but others do not.
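
As a rough illustration of what "refuse to engage" could look like, here is a minimal Python sketch. The `RoadContext` fields, the `may_engage` function, and the 0.95 confidence threshold are all hypothetical, made up for this example; they are not taken from J3016 or from any real vehicle. The one design point it tries to show is that uncertainty about the road type should count as a reason to refuse.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoadContext:
    """Hypothetical snapshot of what the vehicle believes about the current road."""
    divided_highway: bool
    has_cross_streets: bool
    confidence: float  # 0.0 to 1.0: how sure the vehicle is about the fields above


def may_engage(ctx: RoadContext, min_confidence: float = 0.95) -> bool:
    """Allow engagement only on the road type the feature was designed for.

    The threshold is an assumed value for illustration only.
    """
    if ctx.confidence < min_confidence:
        return False  # not sure what kind of road this is, so refuse
    return ctx.divided_highway and not ctx.has_cross_streets


# Example: a divided highway with no cross-streets, high confidence.
print(may_engage(RoadContext(True, False, 0.99)))
# Example: the same road type, but the vehicle is unsure where it is.
print(may_engage(RoadContext(True, False, 0.50)))
```

A real system would also need to disengage gracefully when the vehicle leaves those conditions, which this sketch does not attempt.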

NTSB has made a number of recommendations to improve DMS capability and related Level 2 functionality, but the industry is still working on getting there.

Vehicle automation proponents have been beating the drum of potential safety benefit for years -- to the point that many take safety benefits as an article of faith. However, the reality is that there is no credible evidence that Level 2 capabilities make systems safer. There is plenty of propaganda to be sure, but much of that is based on questionable comparisons of very capable high-end vehicles with AEB and five-star crash test results vs. fleet-average 12 year old cars without all that fancy safety technology. Comparisons also tend to involve apples-meets-oranges different operational scenarios. The results are essentially meaningless, and simply provide grist for the hype machine.

At best, from available information, Level 2 systems are a safety-neutral convenience feature. At worst, AEB is compensating for a moderate overall safety loss from Level 2 features, contributing to an ever-increasing number of fatalities that might be associated with disturbing patterns such as crashing into emergency responders. However, nobody really knows the extent of any problems because of extreme resistance to sharing data by car companies that would reveal the true safety situation. (NHTSA has mandated crash reporting for some crashes, but there is no denominator of miles driven, so crash rates cannot be determined in a reliable way using publicly available data.)
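
To make the missing-denominator problem concrete, here is a small Python sketch. Every number in it is invented purely for illustration; none of it comes from NHTSA data or any company. It shows that the same crash count is consistent with very different crash rates depending on miles driven, which is exactly the figure that is not publicly available.

```python
def crashes_per_million_miles(crashes: int, miles: float) -> float:
    """Crash rate normalized by exposure. Without miles, no rate can be computed."""
    if miles <= 0:
        raise ValueError("miles must be positive; a crash count alone is not a rate")
    return crashes / (miles / 1_000_000)


# Hypothetical: 300 reported crashes, but the miles driven are unknown.
reported_crashes = 300

# Two made-up guesses at exposure give rates that differ by a factor of ten.
rate_if_few_miles = crashes_per_million_miles(reported_crashes, 100_000_000)
rate_if_many_miles = crashes_per_million_miles(reported_crashes, 1_000_000_000)

print(rate_if_few_miles, rate_if_many_miles)
```

Even with a denominator in hand, a fair comparison would still need a baseline from comparably equipped vehicles in comparable driving conditions.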

If the safety benefits were really there, one imagines that these companies full of PhDs who know how to write research papers would be falling all over themselves to publish rigorously stated safety analyses. But all we're seeing is marketing puffery, so we should assume the safety benefit is not yet there (or at least potential safety benefits are not yet supported by the actual data).

In the face of these questions about automation safety and pervasive industry safety opacity, the industry's plan is to ... add even more automation.

Level 2+ systems

"Level 2+" is a totally made up marketing term. The SAE J3016 standard says this term is invalid. But it gets used anyway so let's go with it and try to see what it might mean in practice.

Once you have a vehicle that stays in its lane and avoids most other vehicles, companies feel competitive pressure to add more capability. Features such as lane changing, automatically merging into traffic, and automatically detecting traffic lights might help drivers with workload. And sure, that sounds nice if it can be done safely.

The question is what happens to driver attention as automation features get added and automation performance improves. There is plenty of reason to believe that as the driver perceives automation quality is improving, they will struggle to remain engaged with vehicle operation. They will succumb to driver complacency. The better the automation, the worse the problem, as illustrated by this conceptual graph:

A significant complication is that the driver has to monitor not only the road conditions, but also the car's behavior to determine if the car will do the right thing or not. This can be pretty tricky, especially if it is difficult to know if the car "sees" something happening on the road or not. What if there is a firetruck stopped on the highway in front of you? Will the car detect and stop, or run right into it? How long do you wait to find out? What if you wait just a little too long and suffer a crash? Monitoring automation behavior can be tricky, and is a much different task than simply driving. Assuming that a competent driver is also a competent monitor is a bit of a leap of faith. As you add more complex automation behavior, the driver's ability to track what is and is not supposed to be handled and compare that to what the vehicle is doing can easily be overwhelming.

Monitoring cross-traffic can be especially difficult. How can the supervising driver know their car is about to accelerate out across traffic to make a left turn when there is an oncoming car? Pressing the brake quickly after the car has already started lunging into traffic as a safety supervising driver is an entirely different safety proposition than waiting for a driver to command speed when the road is clear. The human/computer interface implications here are tricky.

Car companies will continue to pile on more automation features. At least some of them will continue to improve DMS capability. There are many human/machine interface issues here to resolve, and the outcome will in large part depend on non-linear effects related to how often the human driver needs to intervene quickly and correctly to ensure safety. How that will work out in real world conditions remains to be seen.

The moral hazard of blaming the driver

A significant issue with Level 2 and 2+ systems is that the driver is responsible for safety, but might not be put into a position to succeed. There are natural limits to human performance when supervising automation, and we know that it is difficult for humans to do well at this task. We should not ask human drivers to display superhuman capabilities, then blame them for being mere mortals. Driver monitoring might help, but we should respect the limits to how much it can help.

There is a temptation to blame the driver for any crash involving automation technology. However, doing so is counterproductive. A crash is a crash. Blame does not change the fact that a crash happened. Blame is a fairly ineffective deterrent to other drivers slacking off in general, and is wholly ineffective at converting normal humans into super-humans. If using a Level 2 or 2+ system results in a net increase in crashes, blaming the drivers won't bring the increased fatalities back to life -- even if we bring criminal charges.

If real-world crashes increase with the use of driver automation (compared to a comparably equipped vehicle in the same conditions without driver automation), they should be considered unreasonably dangerous. Something would need to be done about such systems to change the system, human behavior, or both. Changing human behavior via "education" is usually what is attempted, and almost never works. The human/computer interface and the feature set are much more likely what need to change.

As a simple example of how this might play out, let's say a car company runs TV advertisements showing drivers singing and clapping hands while engaging a Level 2/2+ system. (Dialing back the #autonowashing, later ads show only the passengers doing this.) The company should have data showing that even if a worst case driving automation error for their system takes place mid-clap, the driver will be attentive enough to the situation (despite being caught up in the song) and have a quick enough reaction time to get their hands back onto the steering wheel and intervene for safety. Blaming the driver for clapping hands after airing such a TV commercial should not be a permissible tactic.

Note that we are not saying hands-off is inherently unsafe, but rather that permitting (even encouraging) hands-off operation significantly increases the challenge of ensuring practical safety. The data needs to be there to justify safety for whatever operational concept is being deployed and encouraged.

ALKS: a baby step toward Level 3

The way the term "Level 3" is being used by almost everyone seldom matches what the SAE J3016 standard actually says. (See Myths #6, #7, #8, #14, #15, etc. in this J3016 analysis.) But this is not the place to hash out that incredibly messy situation other than to note that SAE J3016 terminology is not how we should be describing these safety critical driving features at all.

For our purposes, let's assume when a car maker says "Level 3" they mean that the driver can take their eyes off the road, at least for a little bit, so long as they are ready to jump back into the role of driving if the car sounds an alarm for them to do so.

The industry's baby step toward this vision of Level 3 is Automated Lane Keeping Systems (ALKS) as described by the European standard UNECE Reg. 157. (To its credit, this standard does not mention SAE Levels at all.) The short version is that drivers can take their eyes off the road and let the car do the driving in slow speed situations. In general, this is envisioned as a traffic jam assistant (slow speed, stop-and-go, traffic jam situations). We can expect this to be the first Level 3 step in an envisioned transition from Level 2 to 2+ to 3 strategy that is already said to be deployed at small scale in Europe and Japan.

ALKS might work out well. Traffic jams on freeways are pretty straightforward compared to a lot of other operational scenarios. Superficially there are a lot of slow moving cars and not much else. Going just a bit deeper, if the jam is due to a crash there will be road debris, emergency responders, and potentially dazed victims walking through traffic. So saying "no pedestrians" or even "pedestrians will be well behaved" is unrealistic near a crash scene. But one can envision this can be managed, so it seems a reasonable first step so long as safety is considered carefully.

Broader Level 3 safety requirements

Going beyond the strict workings of SAE J3016 (which, if followed to the letter and not exceeded, will almost certainly result in an unsafe vehicle), Level 3 driving safety only works if:

- The Automated Driving System (ADS) is 100% responsible for safety the entire time it is engaged. Period. Any weasel wording "but the driver should..." will lead to Moral Crumple Zone designs (blaming people for automation failing to operate as advertised). Put another way, if the driver is ever blamed for a crash when a Level 3 automation feature is engaged, it wasn't really Level 3.

- The ADS needs to determine with essentially 100% reliability when it is time for the driver to intervene. This is one aspect of the most difficult problem in autonomous vehicle design: having the automation know when it doesn't know what to do. The magnitude of this challenge should not be underestimated. Safe Level 3 is not just a little harder than Level 2. The difference is night and day in any but the most benign operational conditions. Machine learning is terrible at handling unknowns (things it hasn't trained on), but recognizing something is an unknown is required to make sure the driver intervenes when the ADS cannot handle the situation.

- The ADS ensures reasonable safety until the driver has time to respond, despite the fact that something has gone wrong (or is about to go wrong) to prompt the takeover request. This means the ADS needs to keep the car safe at least for a while. If the driver takes a long time to respond, the ADS needs to do something reasonable. In some cases perhaps an in-lane stop is good enough; in others not. (In practice this pushes the ADS arguably to be a very low-end Level 4 system, but we're back to the gritty details of the J3016 standard so let's not even go there. The point is that the driver might take a long time to respond, and the ADS can't simply dump blame on the driver if a crash happens when the going gets tough.)

For ALKS, the main safety plan is to go slowly enough that the car can stop before it is likely to hit anything. That "anything" is predominantly other cars, and sometimes people at an emergency scene. High speed animal encounters, cross traffic, and other situations are ruled out by the narrowly limited operational design domain (ODD), which should be enforced by prohibiting activation in inappropriate situations. The ALKS standard presumes drivers will respond to takeover notifications in 10 seconds, but the vehicle has to do something reasonable even if that does not happen.
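
The "go slowly enough to stop" idea can be sketched with basic kinematics. This is a simplified illustration in Python, not anything taken from UNECE Reg. 157: it assumes a fixed system latency before braking starts and a constant deceleration, and the function names and numeric values are hypothetical. Stopping distance is the distance covered during the latency plus the braking distance v²/(2a); the second function inverts that relationship to get the highest speed from which the car can still stop within a given clear distance.

```python
import math


def stopping_distance_m(speed_mps: float, latency_s: float, decel_mps2: float) -> float:
    """Distance traveled during system latency plus constant-deceleration braking."""
    return speed_mps * latency_s + speed_mps ** 2 / (2 * decel_mps2)


def max_speed_for_clear_distance_mps(
    clear_distance_m: float, latency_s: float, decel_mps2: float
) -> float:
    """Largest speed v satisfying v * latency + v^2 / (2 * decel) <= clear distance.

    This is the positive root of the quadratic in v.
    """
    at = decel_mps2 * latency_s
    return -at + math.sqrt(at ** 2 + 2 * decel_mps2 * clear_distance_m)


# Assumed example values: 20 m of clear road ahead, 0.5 s latency, 6 m/s^2 braking.
v = max_speed_for_clear_distance_mps(20.0, 0.5, 6.0)
print(round(v * 3.6, 1), "km/h")
```

Real braking performance depends on road surface, load, and sensor range, so a safety argument would need margins well beyond this idealized calculation.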

More generally, it is OK to take credit for most drivers being able to respond relatively quickly in most situations. It is likely that a more advanced Level 3 system will progressively degrade its operation over a period of many seconds, such as first slowing down, then coming to a stop in the safest place it can reach given the situation. If a driver falls asleep from boredom, it might take a while for honking horns from other drivers to wake them up. Or it might take longer than 10 seconds to regain situational awareness if overly engrossed in a movie they were told was OK to watch while in this operational mode. Human subject studies can be used to claim credit for most drivers intervening relatively quickly (to the degree that is true) even if all drivers cannot respond that quickly. Credit could be taken for being able to pull over to the side of the road in most situations to reduce the risk of being hit by other vehicles, even if that is not possible in all situations. Safety for human driver takeover is not the result of a single ten second threshold, but rather a stack-up of various levels of degraded operation and probabilities of mishaps in a variety of real-world scenarios.
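
One way to picture that stack-up is as a weighted sum: for each stage of degraded operation, multiply the share of takeover requests that end up resolved at that stage by the chance of a mishap at that stage, then add the results. The Python sketch below does just that. The stage names, shares, and mishap probabilities are entirely made up for illustration; real values would have to come from human subject studies and field data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """Hypothetical stage of a takeover sequence."""
    name: str
    share: float     # fraction of takeover requests resolved at this stage
    p_mishap: float  # probability of a mishap given resolution at this stage


def overall_mishap_probability(stages: list[Stage]) -> float:
    """Total mishap probability per takeover request across all stages."""
    if abs(sum(s.share for s in stages) - 1.0) > 1e-9:
        raise ValueError("stage shares must sum to 1")
    return sum(s.share * s.p_mishap for s in stages)


# Invented numbers, for illustration only.
stages = [
    Stage("driver takes over within 10 s", 0.90, 0.0001),
    Stage("vehicle slows down, driver takes over late", 0.08, 0.001),
    Stage("vehicle stops on shoulder or in lane", 0.02, 0.01),
]

print(overall_mishap_probability(stages))
```

With these invented numbers, the rare final stage contributes more to the total than the common first stage, which is why the slow responders cannot simply be waved away.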

One might be able to argue net acceptable safety as long as the worst cases are infrequent. If the worst cases happen too often -- including inevitable misuse and abuse -- some sort of redesign will be needed. Again, blaming drivers or "educating" them won't fix a fundamentally unsafe operational concept. Safety must be built into the system, not dodged by blaming drivers for having an unacceptably high crash rate.

Advanced features and test platforms

Once ALKS is in place, the inevitable story is going to be to slowly expand the ODD. For example, if things seem safe, increase the speed at which ALKS can operate. Then let it do on/off ramps. And so on.

The same thinking will be in place for Level 2+ systems. If in-lane cruise control works, add lane changing. Add traffic merging. Let it operate in suburbs. Add unprotected left turns. Next thing you know, we'll be back to saying robotaxis will be here next year -- this time for sure!

While this seductive story sounds promising, it's not going to be easy. Automation complacency combined with limits of drivers to supervise potentially unpredictable autonomy functions will be a first issue to overcome. The second will be confusing a Level 2 driver with an autonomous vehicle testbed driver.

We've already discussed automation complacency. However, it is going to get dramatically worse with more advanced functionality. Humans learn to trust automation far too quickly. If a car correctly makes an unprotected left turn 100 times in a row, any safety driver might well be mentally unprepared to intervene in time when it pulls out in front of oncoming traffic on the 101st turn. Even something as simple as automation being able to see moving cars but not detecting an overturned truck on the road has led to crashes, presumably due to automation complacency.

While car companies can argue that trained, professional safety drivers might keep testing acceptably safe (e.g., by conforming to the SAE J3018 safety standard -- which mostly is not being done), that does not apply to retail customers who are driving vehicles.

Tesla has led the industry in some good ways by promoting the adoption of electric vehicles. But it has set a terrible precedent by also adopting a policy of having untrained civilian vehicle owners operate as testers for immature automation technology, leading to reckless driving on public roads.

There is already significant market pressure for other companies to follow the Tesla playbook by pushing out immature automation features to their vehicles and telling drivers that they are responsible for ensuring safety. After all, if Tesla can get away with it, only suffering the occasional wrist slap for egregious rule violations, is it not the case that other companies have a duty to their stockholders to do the same so as to compete on a level playing field? As long as we (the public) allow companies to get away with blaming drivers for crashes instead of pinning responsibility on auto makers for deploying poorly executed automation features, reckless road testing (for example, running red lights) will continue to proliferate.

While it is difficult to draw a line in the sand, we can propose one. Any vehicle that can make turns at intersections with no driver control input is not a Level 2 system -- it is an autonomous vehicle prototype. There is an argument supporting this based on the evolution of J3016. (See page 242 of this paper.) But more importantly, supervising turns in traffic is clearly of a different nature than supervising cruise control on a highway. It takes much more attention and awareness of what the vehicle is about to do to prevent a tragic crash. Especially if you are busy clapping hands to a rock song.

Abusing Level 2/2+ to evade regulation

Another trail blazed by Tesla is letting regulators think they are deploying unregulated Level 2/2+ systems when they are really putting Level 3/4 testbeds on the roads in the hands of ordinary vehicle owners.

Companies should not call something Level 2+ when it is really a testbed for Level 3 features. Deployed Level 2+ features must be production ready instead of experimental, and should never put human drivers in the role of being an unqualified "tester" of safety critical functionality. Among other things, the autonowashing label of "beta test" that implies drivers are responsible for crashes due to automation malfunctions should be banned.

The details are subtle here: if a vehicle does something dangerous that is not reasonably expected by an ordinary driver, that should be considered a software malfunction. Any dangerous behavior that cannot be compensated for by an ordinary driver should not happen -- regardless of whether the driver has been told to expect it or not. Products sold to retail customers should not malfunction, nor should they exhibit unreasonably dangerous behaviors. Telling the driver they are responsible for safety should not change the situation.

Software should either work properly or be limited to trained testers operating under a Safety Management System. Rollout of Level 2+ and Level 3 features can happen in parallel, and that's fine. But a prototype Level 3 feature that can malfunction is not in any way the same as a mature Level 2+ feature -- even if they superficially behave the same way. It's not about the intended behavior, it's about having potentially very ill-behaved malfunctions. A Level 2+ system might not "see" a weird object in the road and perhaps the driver should be able to handle such a situation if they have been told that is an expected behavior. But any system that suddenly swerves the vehicle left into oncoming traffic is not fit for use by everyday drivers. A suddenly left-swerving vehicle is not Level 2/2+ -- it is a malfunctioning prototype vehicle that should only be operated by trained test drivers regardless of the claimed automation level.

Over time we'll see car companies aggressively try to get features to market. We can expect repeated iterations of arguments that the next incremental feature is no big deal for a driver to supervise. But if that feature comes with cautions that drivers must pay special attention beyond what a driver monitoring system supports, or incur extra responsibility, that should be a red flag that we're looking at asking them to be testers rather than drivers. Warnings that the vehicle "may do the wrong thing at the worst time" are unambiguously a problem. Such testing should be strictly forbidden for deployment to ordinary drivers.

Telling someone that a product is likely to have defects (that's what "beta" means these days, right?) means that they are being sold a likely-defective product. As such, they should not be distributed as retail products to everyday customers, regardless of click-through disclaimers. After all, any unqualified "tester" is not just placing themselves at risk, but other road users as well.

For those who say that this will impede progress, see my discussion of the myths propagated by the AV industry in support of avoiding safety regulation. Following basic road testing safety principles should be a part of any development process. Anything else is irresponsible and, in the long run, will hurt the industry more than it helps by generating bad press and accompanying ill will.

Civilian drivers should never be held responsible for malfunctioning technology by being used as Moral Crumple Zones. That especially includes mischaracterizing Level 3 (and above) prototype features as Level 2+ systems.

The bottom line

Perhaps NHTSA will wake up and put a regulatory framework in place to help the industry succeed at autonomous vehicle deployment safety. (They already have proposed a plan, but it is in suspended animation.) Even if the new NHTSA framework proceeds, regulations and proposals from both NHTSA and the states address Levels 3-5, while leaving a situation ripe for Level 2+ abuse. Until that changes, expect to see companies pushing the envelope on Level 2+ as they compete for dominance in the vehicle automation market.